Toward Better Judgments

Addressing the Noise Factor in Decision-Making

How can we ensure that our judgments and decisions are more consistent, and more consistently on target?

In an ideal world, judges would all use the same reasoning to come to fair verdicts for everyone. Regardless of race, gender, age or any other consideration, we could stand before any judge and be confident of receiving the same judgment, the same sentence.

In our world, of course, this is a pipe dream. Even in our personal lives, we aren’t always consistent in the judgments we make.

What is it that gets in the way?

Logic and emotion are often seen as competing actors in judgment and decision-making, with logic taking the starring role. Emotion is blamed for unpleasant “drama,” to be ruled over by logic at all costs. But those who remember Star Trek’s Vulcan character, Mr. Spock, might understand why the use of logic alone can lead to troublesome—or humorous—misunderstandings. Of course, Spock himself (striving always to be purely logical) wouldn’t actually get the humor of his own misunderstandings. But in all seriousness, there isn’t much humor in being bereft of emotion.

One of the findings that came out of the 1990s (“the decade of the brain”) was the news that we don’t function very well on logic alone. In fact, wrote neuroscientist Antonio Damasio in his 1994 book Descartes’ Error, “When emotion is entirely left out of the reasoning picture, as happens in certain neurological conditions, reason turns out to be even more flawed than when emotion plays bad tricks on our decisions.”

It’s well known that the body holds memories of emotional experiences such as trauma. Damasio suggests that somatic markers—emotional experiences stored as tags in the body so we can make decisions more quickly in future situations—may help give us the nudge we need after we’ve done all our cold calculations but still have alternatives to consider. Perhaps this is when such emotional responses as empathy, mercy and compassion also come into play.

Descartes’ error, Damasio wrote, was that he imagined a clear division between the mind and the body: “I think, therefore I am.” But viewing the body and the brain as discrete systems limits our understanding of the whole picture, including the vital role of emotion in decision-making. Damasio asks us to avoid making the same mistake as Descartes.

Let’s imagine, then, that both logic and emotion are in play as we examine two other sources of error that have a profound impact on judgment and decision-making.

The first, psychological bias, is familiar to most of us. It’s the downside of the brain’s marvelous ability to substitute a simple question for a harder one so we can make quick decisions based on experience. These shortcuts are called heuristics. But while heuristics are helpful in many situations, they also have the potential to misdirect our decisions, beliefs and behaviors, and they can lead to bias errors when we aren’t on our toes.

In his 2011 book Thinking, Fast and Slow, Nobel Prize–winning psychologist Daniel Kahneman explained many of the ways bias affects both our faster “System 1” thinking (which is primarily intuitive) and our slower “System 2” thinking (the more methodical, attention-focused system).

Writing ten years later with researchers Olivier Sibony and Cass Sunstein, Kahneman looks at a second impediment to sound judgment and decision-making, one that is itself affected by psychological biases: noise. In decision-making, noise is the unwanted variability in human judgment from one person or situation to the next, in settings where it’s important to reach consistent and equitable outcomes. Bias and noise are the error twins when it comes to making decisions, the bumps in the road that can knock us off the target of sound judgment.

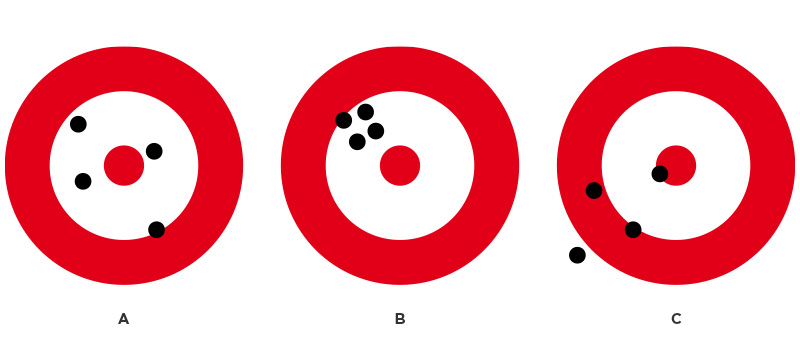

It’s important not to confuse the term noise with sources of noise in decision-making—mood, distractions, psychological biases, all of which certainly lead to inconsistent judgments. Noise describes the variability itself. Kahneman illustrates this using the example of teams in target practice. If an entire team misses toward the same side of the target, the team is statistically biased. We can predict the next shot will go off to the same side as all the others. If the shots are scattered all over the target, the team is statistically “noisy.” The next shot could hit anywhere. In the end, both bias and noise represent error; a winning team would ideally be hitting the bull’s-eye.

The target labeled “A” depicts noise in decision-making; “B” depicts bias; and “C” shows noise and bias.

Adapted from Noise: A Flaw in Human Judgment by Daniel Kahneman, Olivier Sibony and Cass R. Sunstein
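The target-practice illustration can be put into numbers. The following is a minimal sketch, not anything taken from the book: it treats bias as the average miss and noise as the scatter around that average. The shot values and the names `team_noisy` and `team_biased` are hypothetical.

```python
import statistics

def bias_and_noise(errors):
    """Return (bias, noise) for a list of signed errors.

    bias  = mean error (how far off the shots are on average)
    noise = population standard deviation of the errors (their scatter)
    """
    bias = statistics.fmean(errors)
    noise = statistics.pstdev(errors)
    return bias, noise

# Hypothetical horizontal misses, in centimeters from the bull's-eye.
team_noisy = [-6.0, 5.0, -3.0, 4.0]   # scattered, but centered on the target
team_biased = [5.0, 5.5, 4.5, 5.0]    # tightly grouped, but off to one side

for name, shots in [("noisy", team_noisy), ("biased", team_biased)]:
    bias, noise = bias_and_noise(shots)
    # Mean squared error combines both kinds of error: bias**2 + noise**2.
    mse = statistics.fmean([e * e for e in shots])
    print(f"{name}: bias={bias:.2f} noise={noise:.2f} mse={mse:.2f}")
```

In this sketch the noisy team has almost no bias but a large scatter, while the biased team has a small scatter around a consistent miss. Both teams end up with a sizable mean squared error, which is the sense in which bias and noise both represent error.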

The Problem With Noise

Doctors making diagnoses, teachers assigning grades, companies hiring or promoting: these are all areas where fickle judgments (noise) can have lasting and sometimes devastating consequences for the person being evaluated. But perhaps the clearest example is the courtroom. The entire judicial system, in whatever culture one may live, is bound to be an area of great variability, or noise, in human judgment. Surprisingly, noise even occurs in areas widely assumed to be noiseless, such as fingerprint or blood-spatter analysis.

“It would be outrageous if three similar people, convicted of the same crime, received radically different penalties. . . . And yet that outrage can be found in many nations—not only in the distant past but also today.”

This makes sense when we remember that each “judge”—from the arresting officer to the person appointed to sit on the bench—operates with a different set of experiences and psychological biases. These biases, along with random factors that might include mood or even the weather, can all contribute to noise.

Kahneman and his colleagues find two broad types of noise. Both are reflected in the judgments a single judge makes across multiple cases, as well as in the differences between individuals judging the same case.

“As any defense lawyer will tell you,” writes Kahneman’s research team, “judges have reputations, some for being harsh ‘hanging judges,’ who are more severe than the average judge, and others for being ‘bleeding-heart judges,’ who are more lenient than the average judge. We refer to these deviations as level errors,” and to the variability in these errors as level noise.

In the case of harsh judges, level noise suggests that they would be equally severe in each of their cases. This doesn’t happen, however. They can be harsher than their own personal average in some cases and more lenient than their personal average in others. This is called pattern noise.

Random influences can contribute to pattern noise, too; for instance, a judge who is generally harsh in sentencing may go lighter on someone who reminds him of his daughter. And to add yet another layer of complexity, if the judge is in a better mood than usual because his daughter won an award at school, he may be even more lenient on the offender who looks like her than he might otherwise have been. These variables constitute a subset of pattern noise and are referred to as occasion noise, which, according to Kahneman, “affects all our judgments, all the time.”
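To make the distinction between level noise and pattern noise concrete, here is a minimal sketch using a standard variance decomposition on a small table of sentences. The numbers are invented for illustration, and the variable names are assumptions. Occasion noise cannot be separated out in this sketch, because that would require the same judge to rule on the same case more than once.

```python
import statistics

# Hypothetical sentences (in years): rows are judges, columns are cases.
sentences = [
    [2.0, 5.0, 9.0],    # a generally lenient judge
    [4.0, 6.0, 11.0],   # a middling judge
    [6.0, 10.0, 10.0],  # a generally harsh judge, unusually mild on case 3
]
n_judges = len(sentences)
n_cases = len(sentences[0])

grand_mean = statistics.fmean([x for row in sentences for x in row])
judge_means = [statistics.fmean(row) for row in sentences]
case_means = [
    statistics.fmean([row[c] for row in sentences]) for c in range(n_cases)
]

# Level noise: how much judges differ in their average severity.
level_var = statistics.pvariance(judge_means)

# Pattern noise: what is left after removing each judge's average severity
# and each case's average sentence (the judge-by-case interaction).
residuals = [
    sentences[j][c] - judge_means[j] - case_means[c] + grand_mean
    for j in range(n_judges)
    for c in range(n_cases)
]
pattern_var = statistics.fmean([r * r for r in residuals])

# Overall noise: variance across judges within a case, averaged over cases.
system_var = statistics.fmean(
    [statistics.pvariance([row[c] for row in sentences]) for c in range(n_cases)]
)

print(f"level noise^2   = {level_var:.3f}")
print(f"pattern noise^2 = {pattern_var:.3f}")
print(f"overall noise^2 = {system_var:.3f}")
```

With complete data like this, the two squared components add up to the overall squared noise, so the sketch shows how much of the disagreement comes from judges being harsh or lenient in general and how much comes from their idiosyncratic reactions to particular cases.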

One of the most-studied sources of occasion noise is mood. Perhaps unsurprisingly, when we’re in a good mood, we’re usually more approving, positive and helpful, and less likely to challenge our first impressions. We also interpret the behavior of others through rosier lenses. As you might imagine, the reverse is true when we’re in a bad mood.

Mood influences a lot of what we think and notice, and how we make judgments. It influences our gullibility as well—how prone we are to being taken in by impressive-sounding but nonsensical ideas. Behavioral scientists who study this quality combine random buzzwords and ask subjects to rate the profoundness of sentences like these: “Hidden meaning transforms unparalleled abstract beauty,” or “Imagination is inside exponential space time events.” In one study, using similar pseudo-profundity, researcher Joseph Forgas found that people who are in a good mood are more likely to see meaning in gibberish texts, and less likely to detect deception or manipulation.

So if you can put people in a good mood, you make them more receptive to nonsense and generally more easily persuaded. “Conversely,” Kahneman says, “eyewitnesses who are exposed to misleading information are better able to disregard it—and to avoid false testimony—when they are in a bad mood.”

“Your judgment depends on what mood you are in, what cases you have just discussed, and even what the weather is. You are not the same person at all times.”

In a courtroom, then, you may want eyewitnesses to be in a bad mood so they don’t testify falsely against you; but you’d hope the jury is in a good mood so they’re less likely to find you guilty. For the good of society, however, instead of stacking the courtroom in our favor, what we really want are consistent judgments that are accurate and equitable.

Human judicial systems are far from this ideal, of course, so we can clearly see why noise is a problem. But how much of it can we realistically eliminate?

How Much Noise Is Too Much?

Because each of us operates with a different set of biases, decision-making is always going to be noisy to some degree. Consider also that mercy and compassion are inherently noisy influences, yet we wouldn’t want to live in a world without them. Throw in the fact that the human mind is just not capable of perfect consistency, and it becomes clear that completely noise-free decisions are beyond reach. Our best option is to evaluate the judgments we’re making, find the problematic influences, and try to control whatever we can control.

How do we evaluate judgments? Well, we can measure the accuracy of a weather forecast, for instance, by just comparing it to the actual temperature. But not all judgments can be verified this way. The answer, says Kahneman, is to do a “noise audit”—to evaluate the judgment process across a large number of cases, rather than looking at the outcome of any single case.

Evaluating the process instead of a single outcome works for such predictive judgments as weather forecasting, as well as for evaluative judgments such as sentencing criminals, rating restaurants or hiring employees. It’s also the best place to focus for improving our judgments.
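As a rough illustration of what a noise audit might compute, the sketch below has several assessors judge the same cases independently and then measures how far apart any two of them typically land. The scoring rule (the difference between a pair divided by the pair's average) is an assumption chosen for simplicity, and the `audit` data is hypothetical.

```python
from itertools import combinations
from statistics import median

# Hypothetical audit data: each case was judged independently by three assessors.
audit = {
    "case_a": [9500, 16700, 12000],
    "case_b": [800, 1000, 1200],
    "case_c": [40000, 40000, 44000],
}

def relative_differences(judgments):
    """Yield |a - b| divided by the pair's mean, for every pair of judgments."""
    for a, b in combinations(judgments, 2):
        yield abs(a - b) / ((a + b) / 2)

# Pool the pairwise differences across all cases, then summarize them.
all_diffs = [
    diff
    for judgments in audit.values()
    for diff in relative_differences(judgments)
]
print(f"pairs compared: {len(all_diffs)}")
print(f"median relative difference: {median(all_diffs):.0%}")
```

The point of the design is that no single case needs a known right answer. The audit looks only at how much the judgments disagree with one another across many cases.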

Unfortunately, key decision-makers who could profit from learning how to improve their judgment often don’t see the problem as applying to them. In research situations, senior executives (no matter how experienced) cling doggedly to what they refer to as “intuition” or “gut feelings.” This raises a question, as Kahneman, Sibony and Sunstein comment with some acerbity: “What, exactly, do these people, who are blessed with the combination of authority and great self-confidence, hear from their gut?”

“Professionals seldom see a need to confront noise in their own judgments and in those of their colleagues.”

The reality is that in study after study, these gut feelings and intuitions impeded sound and accurate judgments rather than improving them. Rules and algorithms outdid human judges every time.

So why don’t human decision-makers use rules more often? Because by its very definition, a “gut feeling” feels right, and that feeling is its own reward. It carries with it, writes Kahneman, a sense that “all the pieces of the jigsaw puzzle seem to fit.” What often passes under our conscious radar, he adds, is that our gut has no compunction about “hiding or ignoring pieces of evidence that don’t fit.”

The emotional reward we get from making a gut-feeling judgment is so seductive that we eagerly reach for it, especially in situations where we don’t have enough facts to help us come to a decision, or when we’re trying to predict something that’s unpredictable. In these situations, we’re usually in denial of our own ignorance—and the more complete our ignorance, the more we’re forced to justify relying on gut feelings.

Noise-Canceling Strategies

Mechanical decisions are less noisy than human judgment, especially if there’s a lot of data involved, but machines make mistakes too. An algorithm that identifies and plays off race or gender could certainly render results that are noiseless but heavily biased.

But Kahneman and his colleagues aren’t looking to replace human judgment with mechanical prediction. It’s human judgment they’re looking to improve. That said, some of the same methods that are used to make rules and algorithms better for machines also make human judgment better.

“No one should doubt that it is possible—even easy—to create an algorithm that is noise-free but also racist, sexist, or otherwise biased.”

The first step is to realize that the error twins, bias and noise, are sneaky little characters. We can try to address bias by correcting for it after the judgment has been made (akin to adding two pounds to the results on a scale because we know that’s how far it’s off). We can also try to offset bias beforehand by nudging people in a certain direction. Automatic enrollments in pension plans, placing healthier foods in prominent places in grocery stores—these are examples of preventive debiasing.

Another form of preventive debiasing aims to educate people to recognize and overcome biases. This is a bit more straightforward. You’re inviting people to be part of the process instead of trying to force them down the right path (or the right aisle in the grocery store), but it’s still a complicated venture. “Decades of research have shown that professionals who have learned to avoid biases in their areas of expertise often struggle to apply what they have learned to different fields,” writes Kahneman. The challenge is to realize that the same biases appear in all kinds of situations.

An alternative to either preventing bias or controlling for it after the fact is the concept of looking for biases as they happen. For example, the sole function of one person on the decision-making team could be to observe how the decision is being made, using a checklist to assess in real time whether any biases may be derailing the process.

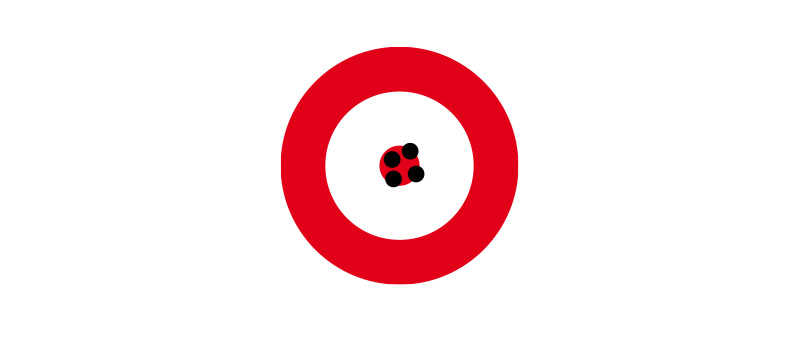

In Daniel Kahneman’s analogy, judgments and decisions that are as free of bias and noise as possible are depicted as hitting the bull’s-eye.

Adapted from Noise: A Flaw in Human Judgment by Daniel Kahneman, Olivier Sibony and Cass R. Sunstein

Six Principles of Decision Hygiene

There are certainly people who make better judges than others; they tend to be those who are actively open-minded—less susceptible to certain biases and more willing to entertain counterarguments. Yes, intelligence and skill do enter the mix, but the best judges are able to imagine how other competent judges might think in their place.

Nevertheless, there are strategies that can help improve human judgment across the board. Hunting down sources of bias is one such strategy. In addition, Kahneman and his colleagues have identified six underlying principles of “decision hygiene” that are known to clean up noise:

1) Keep in mind that the goal of judgment is accuracy, not individual expression.

This first principle points to the fact that pattern noise is generally caused by the personality differences that make us each see problems differently. This is why it’s so important to have clear, simple rules, standards or structured guidelines that people can easily follow to bring judgments into closer agreement and reduce noise.

2) Think statistically and take the outside view of the case.

Instead of thinking of the case as a unique problem, think of it as representative of similar cases. This allows for appreciating insights from past outcomes and helps prevent overconfidence in our own judgments. “People cannot be faulted for failing to predict the unpredictable,” Kahneman acknowledges, “but they can be blamed for a lack of predictive humility.”

3) Structure judgments into several independent tasks.

Because the human brain is always on the lookout for patterns (even when there are none), we unconsciously try to make a coherent story out of the information we have, even if it means ignoring or distorting whatever doesn’t fit. We increase the accuracy of our judgments when we break a problem down into smaller tasks and address each one independently.

4) Resist premature intuitions.

Remember Damasio’s suggestion that we need that last nudge of intuition to help us settle on a decision? Kahneman and his colleagues don’t ban the use of intuition, but they agree that we must first carefully consider the evidence, duly following any available rules, standards or guidelines to ensure we haven’t been biased by information that isn’t relevant.

5) Obtain independent judgments from multiple judges, then consider aggregating those judgments.

You may have heard the proverb, “In a multitude of counselors there is safety.” But you don’t want them all in the same meeting room together while you present your problem. That would only add to the noise, since one person’s opinion can influence another’s. The better approach is to collect each judgment separately before bringing the judges together to come to a resolution. Adding the step of averaging the judgments can help filter out noise, because independent errors tend to cancel one another; an error that all the judges share will not average away.

6) Favor relative judgments and relative scales.

We’re better at making comparative judgments than we are at making absolute ones. In other words, it’s easier to place something on a scale when we’re measuring it against other known cases. When we have an empty scale, with nothing to compare it with, a single case is much more difficult to place accurately.
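The effect of the fifth principle, aggregating independent judgments, can be simulated. The sketch below is a toy model under stated assumptions: every judge shares the same fixed bias, and each judge's remaining error is independent random scatter. The constants `TRUE_VALUE`, `SHARED_BIAS` and `NOISE_SD` are hypothetical.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

TRUE_VALUE = 100.0   # hypothetical correct answer
SHARED_BIAS = 5.0    # error that every judge has in common
NOISE_SD = 10.0      # independent scatter in each judge's estimate

def one_judgment():
    """One judge's estimate: truth plus shared bias plus independent noise."""
    return TRUE_VALUE + SHARED_BIAS + random.gauss(0.0, NOISE_SD)

def panel_average(n_judges):
    """Average the independent estimates of n_judges judges."""
    return statistics.fmean([one_judgment() for _ in range(n_judges)])

def summarize(n_judges, trials=5000):
    """Estimate the bias and noise of the panel average over many trials."""
    results = [panel_average(n_judges) for _ in range(trials)]
    bias = statistics.fmean(results) - TRUE_VALUE
    noise = statistics.pstdev(results)
    return bias, noise

for n in (1, 4, 16):
    bias, noise = summarize(n)
    print(f"{n:>2} judges: bias={bias:.1f} noise={noise:.1f}")
```

In this model the noise of the averaged judgment shrinks roughly with the square root of the number of judges, while the shared bias stays about where it started. That is why the judgments need to be collected independently: if judges influence one another, their errors stop being independent and the averaging loses much of its benefit.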

The takeaway from all of this is that human judgment is noisy; it’s fraught with inconsistency and error. Yes, in an ideal world all judges would be of the same mind and judgment. In any situation where we’re being evaluated, we could be confident of being treated fairly, consistently, correctly. Biases wouldn’t matter, the weather wouldn’t matter, cultural differences wouldn’t matter; the same judgment would be reached, whatever the color of our skin, the neighborhood we’re from, or the mood of the judge.

The fact is, we don’t live in such a perfect world, and we’ll never be able to fully eliminate noise in human judgment. But applying these principles can vastly improve our performance in our own personal sphere.